Quick Start

Prefer deploying to the cloud?

Starting a Zep server locally is simple.

1. Clone the Zep repo

2. Add your OpenAI API key to a .env file in the root of the repo:

Important

Zep uses OpenAI for chat history summarization, intent analysis, and, by default, embeddings. You will need an OpenAI API key, which you can obtain from OpenAI.
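If you prefer to script step 2 rather than edit the file by hand, the following is a minimal sketch of a helper that sets a key in a `.env` file. The helper name `set_env_var` is hypothetical, and the variable name `ZEP_OPENAI_API_KEY` used in the usage note is an assumption; confirm the exact name against the Zep configuration documentation before relying on it.

```python
from pathlib import Path


def set_env_var(env_path: Path, name: str, value: str) -> None:
    """Set name=value in a .env file, replacing an existing entry for name if present."""
    lines = env_path.read_text().splitlines() if env_path.exists() else []
    entry = f"{name}={value}"
    for i, line in enumerate(lines):
        # Match only an assignment to this exact name, not comments or other keys.
        if line.strip().startswith(f"{name}="):
            lines[i] = entry
            break
    else:
        # No existing entry was found, so append a new one.
        lines.append(entry)
    env_path.write_text("\n".join(lines) + "\n")
```

Run from the root of the cloned repo, a call might look like `set_env_var(Path(".env"), "ZEP_OPENAI_API_KEY", "<your key>")`. Other entries and comments in the file are left untouched.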

3. Start the Zep server:

This starts a Zep server on port 8000, along with the NLP and database server backends.
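To confirm that something is listening on port 8000 before moving on, a small readiness check can help. The sketch below uses only the Python standard library; the function name `wait_for_port` and its defaults are illustrative and not part of Zep.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Return True once a TCP connection to host:port succeeds, False after timeout seconds."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # A successful connect means something is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Connection refused or timed out: retry until the deadline passes.
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

For a local deployment, `wait_for_port("localhost", 8000)` should return `True` once the server container accepts connections. An open port only shows that a process is listening; it does not guarantee that the NLP and database backends have finished initializing.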

Secure Your Zep Deployment

If you are deploying Zep to a production environment, or anywhere the Zep APIs are exposed to the public internet, ensure that you secure your Zep server.

Review the Security Guidelines and configure authentication. Failing to do so will leave your server open to the public.

4. Get started with the Zep SDKs!

- Install the Python or JavaScript SDK by following the SDK Guide.

- Looking to develop with LangChain or LlamaIndex? Check out Zep's LangChain and LlamaIndex support.

Docker on Macs: Embedding is slow!

For Docker Compose deployments, Zep defaults to using OpenAI's embedding service.

For local embeddings, Zep relies on PyTorch for inference. On macOS, Zep's NLP server runs in a Linux ARM64 container. PyTorch is not optimized for Linux ARM64 and cannot access the acceleration hardware in M-series Macs, so local embedding is slow.

Want to use local embeddings? See Selecting Embedding Models.

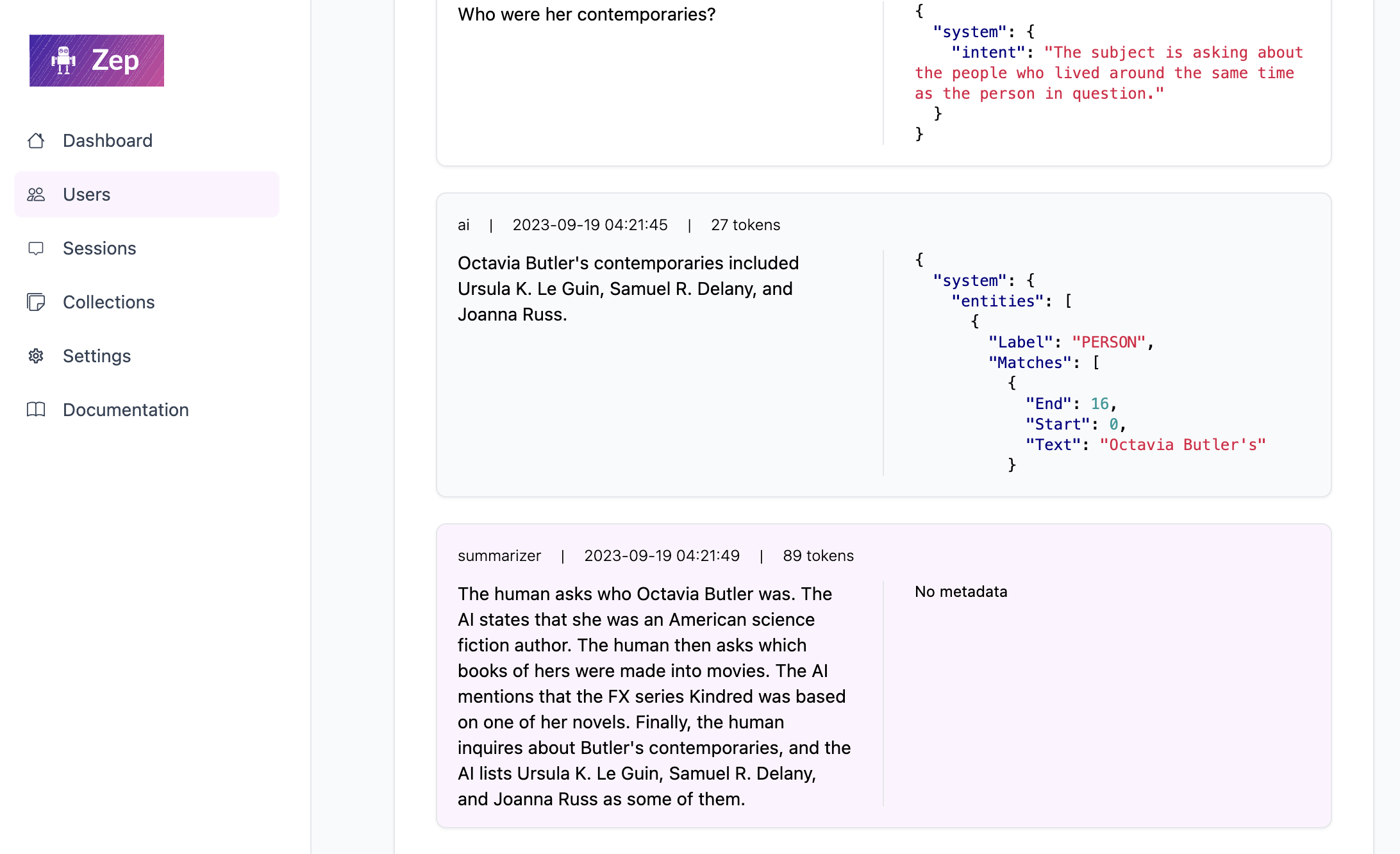

5. Access the Zep Web UI at http://localhost:8000/admin (assuming you are running Zep locally)

Next Steps

- Setting up authentication

- Developing with Zep SDKs

- Learn about Extractors

- Setting Zep Configuration options

- Learn about deploying to production